Diffusion models • Text-guided editing • Tailored applications • Local & cloud deployment

UNDERSTANDING THE TECHNOLOGY

Diffusion Models for Controlled Image Transformation

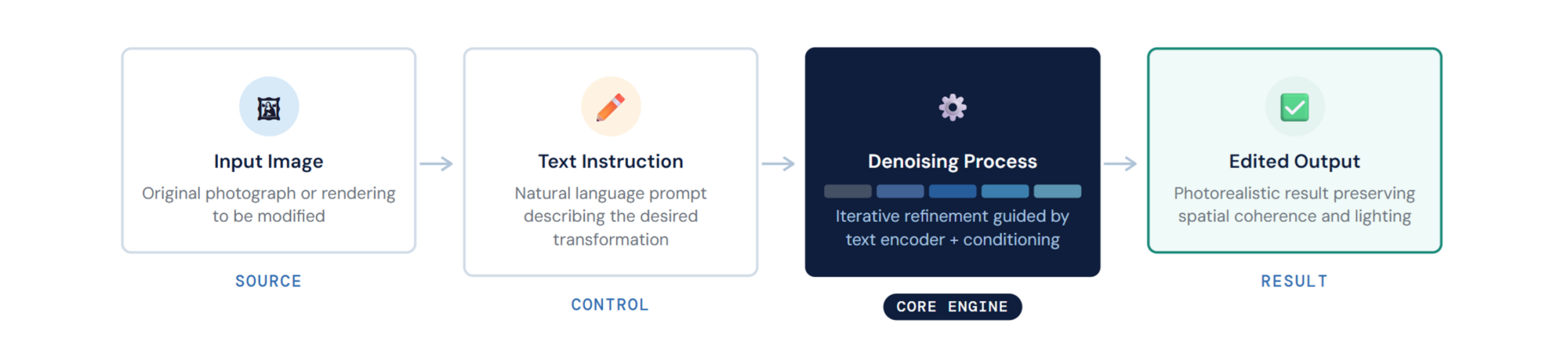

Diffusion models are a class of generative AI architectures that learn to create and modify images by iteratively refining noise into coherent visual output. Unlike earlier generative approaches, diffusion models produce high-fidelity results with fine-grained control over composition, style, and spatial structure — making them suitable for professional applications where precision matters.

When combined with text encoders (CLIP, T5) and conditioning mechanisms (ControlNet, IP-Adapter, masks), diffusion models become powerful tools for targeted image editing: modifying specific regions of a photograph, transferring materials from a reference image, compositing objects into existing scenes, or generating entirely new images from text descriptions — all while preserving the spatial coherence, perspective, and lighting of the original.
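As an illustration of how a mask keeps an edit confined to one region, here is a minimal sketch of the compositing step commonly used in mask-guided editing: edited pixels are kept where the mask is 1, and original pixels are kept where it is 0. The function name `composite_with_mask` and the list-of-lists image representation are assumptions made for this example, not part of any specific library or of our production code.

```python
from typing import List

# Grayscale image as rows of pixel values in [0, 1] (illustrative representation).
Image = List[List[float]]


def composite_with_mask(source: Image, edited: Image, mask: Image) -> Image:
    """Blend `edited` into `source`: mask=1 takes the edit, mask=0 keeps the original."""
    if not (len(source) == len(edited) == len(mask)):
        raise ValueError("source, edited and mask must have the same height")
    result = []
    for src_row, edit_row, mask_row in zip(source, edited, mask):
        if not (len(src_row) == len(edit_row) == len(mask_row)):
            raise ValueError("rows must have the same width")
        # Per-pixel linear blend; fractional mask values give a soft edge.
        result.append([
            m * e + (1.0 - m) * s
            for s, e, m in zip(src_row, edit_row, mask_row)
        ])
    return result


source = [[0.2, 0.2], [0.2, 0.2]]
edited = [[0.9, 0.9], [0.9, 0.9]]
mask = [[1.0, 0.0], [0.0, 0.5]]
blended = composite_with_mask(source, edited, mask)
```

Pixels outside the mask are returned unchanged, which is why the rest of the photograph keeps its original content.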

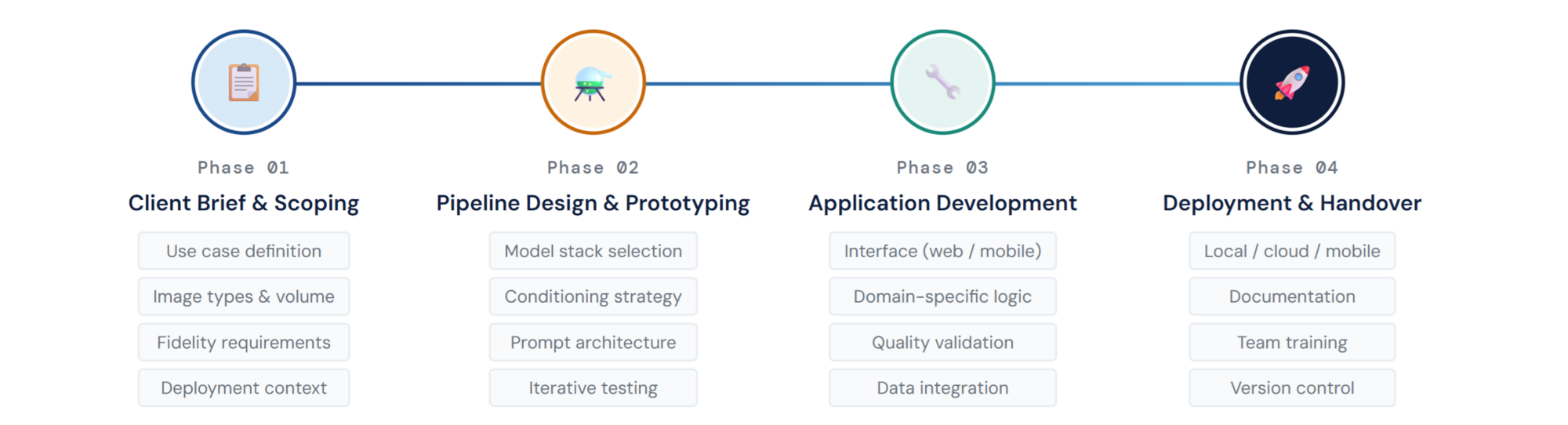

Urban Geo Analytics does not offer generic image generation. We build purpose-built pipelines where every transformation is controlled, reproducible, and adapted to a specific domain. The model, the editing logic, the prompt architecture, and the output format are all designed around the client’s use case — whether that is urban design prototyping, heritage visualisation, product configuration, or training data synthesis.

Diffusion-based image editing pipeline: a source image and natural language instruction are jointly encoded and used to guide iterative denoising, producing a high-fidelity edited output that preserves the spatial structure of the original image.
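The iterative denoising idea can be sketched in a few lines. The example below is a toy, deterministic DDIM-style sampling loop over a three-value "image". It makes two loud assumptions: the noise schedule `alpha_bar` is a simple linear one chosen for readability, and the noise predictor is an oracle that already knows the target, standing in for the trained, text- and image-conditioned network a real pipeline would use. All names are illustrative.

```python
import math
import random
from typing import Callable, List

Vector = List[float]


def alpha_bar(t: int, num_steps: int, floor: float = 0.01) -> float:
    """Toy linear schedule (assumption): 1.0 at t=0 (clean), `floor` at t=num_steps."""
    return 1.0 - (1.0 - floor) * t / num_steps


def make_oracle(target: Vector, num_steps: int) -> Callable[[Vector, int], Vector]:
    """Stand-in for a trained network: returns the noise implied by a known target."""
    def predict_noise(x: Vector, t: int) -> Vector:
        a_t = alpha_bar(t, num_steps)
        return [(xi - math.sqrt(a_t) * ti) / math.sqrt(1.0 - a_t)
                for xi, ti in zip(x, target)]
    return predict_noise


def ddim_sample(predict_noise: Callable[[Vector, int], Vector],
                start: Vector, num_steps: int) -> Vector:
    """Deterministic DDIM-style loop: refine `start` from step num_steps down to 0."""
    x = list(start)
    for t in range(num_steps, 0, -1):
        a_t = alpha_bar(t, num_steps)
        a_prev = alpha_bar(t - 1, num_steps)
        eps = predict_noise(x, t)
        # Estimate the clean signal from the current noisy sample.
        x0_pred = [(xi - math.sqrt(1.0 - a_t) * ei) / math.sqrt(a_t)
                   for xi, ei in zip(x, eps)]
        # Re-noise the estimate to the (lower) noise level of the previous step.
        x = [math.sqrt(a_prev) * x0i + math.sqrt(1.0 - a_prev) * ei
             for x0i, ei in zip(x0_pred, eps)]
    return x


random.seed(0)
target = [0.1, 0.5, 0.9]
noise = [random.gauss(0.0, 1.0) for _ in target]
result = ddim_sample(make_oracle(target, 20), noise, 20)
```

Because the oracle is perfect, the loop recovers the target almost exactly. With a learned predictor the estimate improves gradually across steps, which is where the source image and text instruction steer the result.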

OUR APPROACH

How We Build Tailored Generative Applications

Model Selection & Pipeline Design

Every engagement begins with understanding the client’s visual transformation needs: what kind of images, what kind of edits, what level of fidelity, what throughput. We then select and configure the appropriate model stack from the full ecosystem of open-source diffusion architectures — Qwen-Image-Edit, Stable Diffusion, SDXL, Flux, and specialised variants — choosing the architecture that best fits the task.

We design modular pipelines where each stage of the generative process (encoding, conditioning, sampling, decoding) is exposed and configurable. This means the client or our team can adjust parameters, swap components, or extend the pipeline without rebuilding from scratch. Custom processing modules developed by UGA handle domain-specific operations like sequential image loading, batch iteration, context-aware conditioning, and structured output formatting.
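One way to picture this modularity is a pipeline object whose four stages are plain, swappable functions. The sketch below is an assumption-laden illustration: `EditPipeline`, the stage functions, and the string placeholders are all hypothetical and simply stand in for real encoders, conditioners, samplers, and decoders.

```python
from dataclasses import dataclass, replace
from typing import Any, Callable, Dict

State = Dict[str, Any]
Stage = Callable[[State], State]


@dataclass(frozen=True)
class EditPipeline:
    """Four exposed stages; each takes the running state and returns an updated copy."""
    encode: Stage
    condition: Stage
    sample: Stage
    decode: Stage

    def run(self, request: State) -> State:
        state = dict(request)
        for stage in (self.encode, self.condition, self.sample, self.decode):
            state = stage(state)
        return state


# Placeholder stages (hypothetical): strings stand in for tensors and images.
def encode(state: State) -> State:
    return {**state, "latent": f"latent({state['image']})"}


def condition(state: State) -> State:
    return {**state, "guidance": f"text({state['prompt']})"}


def condition_with_mask(state: State) -> State:
    return {**state, "guidance": f"text({state['prompt']})+mask({state['mask']})"}


def sample(state: State) -> State:
    return {**state, "latent": f"denoised({state['latent']}|{state['guidance']})"}


def decode(state: State) -> State:
    return {**state, "output": f"image({state['latent']})"}


base = EditPipeline(encode, condition, sample, decode)
# Swap a single component without rebuilding the rest of the pipeline.
masked = replace(base, condition=condition_with_mask)

base_out = base.run({"image": "street.jpg", "prompt": "add trees"})["output"]
masked_out = masked.run(
    {"image": "street.jpg", "prompt": "add trees", "mask": "sidewalk.png"}
)["output"]
```

Keeping each stage behind the same small interface is what lets a component be replaced or extended in isolation.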

Application Development

The core differentiator of our service is not the model itself — it is the application built around it. We develop dedicated tools for specific projects and organisations.

For an architecture firm, this might be a facade renovation visualiser where users upload a building photograph, select a material palette, and receive photorealistic renderings of the proposed change. For a municipality, it might be a streetscape greening tool that shows what a road would look like with added trees, wider sidewalks, or reduced parking. For a product company, it might be a configurator that generates product variants in context.

Each application includes its own interface (web-based, desktop, or mobile), prompt logic tailored to the domain, output quality validation, and integration with the client’s existing data and delivery systems. We handle everything from UX design through to GPU provisioning and deployment.

Field worker using a tablet to visualise streetscape modifications in real time on location (image generated by our algorithms for illustrative purposes only)

Mobile & Field Deployment

Not all image editing happens at a desk. For urban planning, site assessment, and participatory design, we develop mobile applications (Android APK) that bring diffusion-based editing directly into the field. A planner standing on a street can photograph the scene, select a transformation — add trees, widen the sidewalk, change the facade material — and see a photorealistic result on their tablet in seconds.

This real-time, on-site capability transforms stakeholder engagement: instead of presenting pre-rendered scenarios in a meeting room, practitioners can generate and discuss visual alternatives directly at the location being discussed. The same technology supports field surveys where inspectors photograph assets and generate annotated or modified versions on the spot, with results geotagged and synced to a central platform.

Batch Processing & Scale

Many use cases require applying the same transformation to hundreds or thousands of images. Our pipelines support sequential and random image loading, automated iteration over folders, and structured output with metadata. This makes it possible to, for example, generate greened versions of every street photograph in a city-wide survey, or produce material variants of every facade in a building inventory — operations that would be impractical to perform manually.
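A minimal sketch of such a batch loop, using only the Python standard library, might look like the following. The `edit` callable is a placeholder for the actual diffusion call, and the function names, the `_edited` filename suffix, and the `manifest.json` layout are assumptions made for this example.

```python
import json
import random
from pathlib import Path
from typing import Callable, List, Optional

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def list_images(folder: Path, order: str = "sequential",
                seed: Optional[int] = None) -> List[Path]:
    """Return image files in name order, or shuffled reproducibly when order='random'."""
    images = sorted(p for p in folder.iterdir()
                    if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES)
    if order == "random":
        random.Random(seed).shuffle(images)
    elif order != "sequential":
        raise ValueError("order must be 'sequential' or 'random'")
    return images


def run_batch(folder: Path, out_dir: Path,
              edit: Callable[[bytes, str], bytes], prompt: str,
              order: str = "sequential", seed: Optional[int] = None) -> Path:
    """Apply `edit` to every image in `folder` and write outputs plus a JSON manifest."""
    out_dir.mkdir(parents=True, exist_ok=True)
    records = []
    for index, src in enumerate(list_images(folder, order, seed)):
        dst = out_dir / f"{src.stem}_edited{src.suffix}"
        dst.write_bytes(edit(src.read_bytes(), prompt))
        # One metadata record per image keeps the batch traceable.
        records.append({"index": index, "source": src.name,
                        "output": dst.name, "prompt": prompt})
    manifest = out_dir / "manifest.json"
    manifest.write_text(json.dumps(records, indent=2), encoding="utf-8")
    return manifest
```

Passing a fixed `seed` makes the random order repeatable, so a batch can be re-run and compared against an earlier one.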

Video Generation & Dynamic Scene Simulation

Static before-and-after images answer the question of what a space will look like. They do not answer how it will feel to move through it. Image-to-video generation extends the diffusion pipeline into the time dimension: starting from a single edited frame, a video model generates a short animated sequence that brings the scene to life — animating pedestrians crossing a newly pedestrianised street, cyclists using a protected lane, vehicles navigating a reconfigured junction, or crowds gathering in a redesigned public square.

We integrate open-source image-to-video architectures into our existing pipelines. The workflow is a direct extension of image editing: the diffusion-edited scene serves as the input frame, and the video model generates motion that is consistent with the scene’s geometry, lighting, and depth. The result is a short clip, typically 3–8 seconds, that can be looped, extended, or used as a storyboard frame for longer narrative sequences.

For urban planners and mobility analysts, this opens use cases that static imagery cannot serve: illustrating how a traffic-calming intervention might change vehicle flow, showing pedestrian density on a widened footway, or demonstrating to stakeholders how a proposed market square would animate on a typical Saturday morning. These clips are not simulation outputs in the engineering sense and do not replace agent-based traffic models, but they provide an intuitive visual that is immediately legible to non-specialist audiences and effective in consultation and engagement settings.

Image -> Image Modifications -> Video Generation (generated by our algorithms for illustrative purposes only)

APPLICATIONS

Where We Deploy Generative Image Pipelines

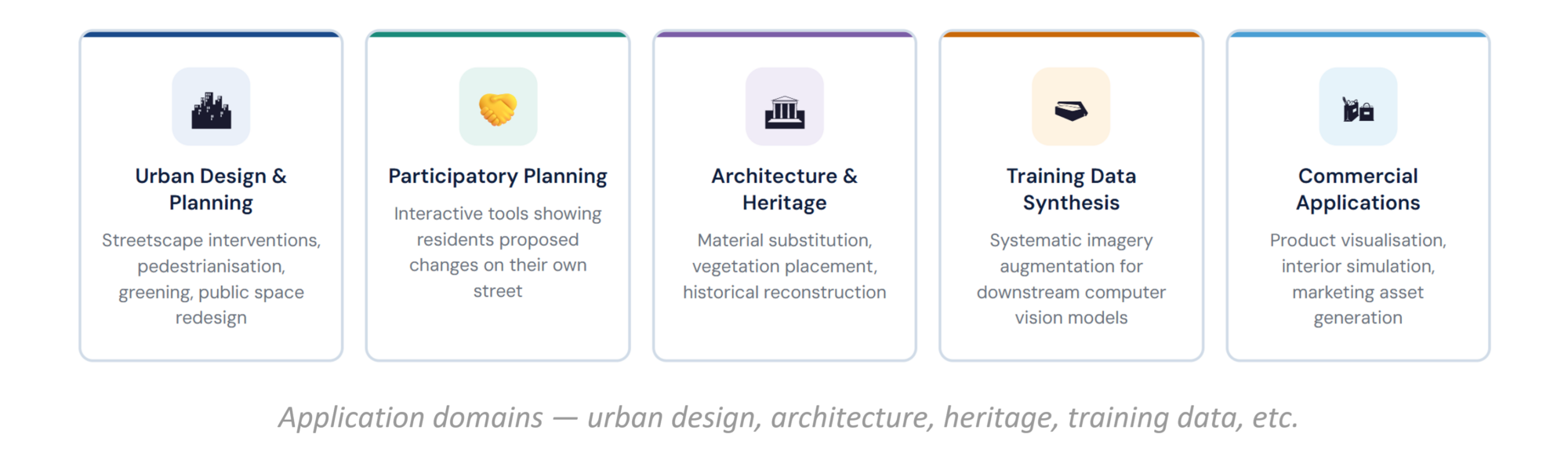

Our generative image services span a range of sectors. In urban design and planning, we build tools for visualising streetscape interventions — pedestrianisation, greening, facade renovation, public space redesign — producing photorealistic before-and-after images that support design decisions and stakeholder communication. For participatory planning, we create interactive tools that show residents what proposed changes would look like on their actual street, generating visual scenarios for public engagement workshops and consultations.

Beyond static imagery, our image-to-video pipelines add a dynamic dimension to urban proposals: edited streetscapes can be animated to show pedestrian flows, cycling activity, vehicle movements, or crowd dynamics, making proposals immediately legible to non-specialist audiences in consultation settings.

In architecture and heritage, we develop pipelines for material substitution, vegetation placement, context-aware scene composition, and historical reconstruction from archival photographs.

For training data augmentation, we systematically transform existing imagery to generate synthetic datasets for downstream computer vision models, a technique increasingly used when labelled training data is scarce or expensive to produce.

We also serve commercial applications including product visualisation, interior design simulation, and marketing asset generation: any domain where controlled, high-quality image editing at scale creates business value.

OPEN-SOURCE FOUNDATION

Backed by Our Open-Source Pipelines

Our generative image work is informed by Diffusion Pipelines for Urban Scene Editing, an open-source collection of versioned ComfyUI workflows (v1.0–v1.3) we develop and maintain. These pipelines — from basic text-guided editing through reference-based composition, batch processing, and mask-guided inpainting — demonstrate the core capabilities we deploy in production for clients.

Source code: https://github.com/perezjoan/ComfyUI-QwenEdit-Urbanism-by-UGA
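For readers who want to run a workflow programmatically rather than through the ComfyUI interface, the sketch below queues a workflow over ComfyUI's HTTP API using only the standard library. It assumes a ComfyUI server running locally on its default address (`http://127.0.0.1:8188`) and a workflow exported in ComfyUI's API format. The helper names are illustrative and are not taken from the repository.

```python
import json
import urllib.request
import uuid
from typing import Any, Dict, Optional


def build_queue_request(workflow: Dict[str, Any],
                        server: str = "http://127.0.0.1:8188",
                        client_id: Optional[str] = None) -> urllib.request.Request:
    """Build the POST request that submits an API-format workflow to /prompt."""
    payload = {"prompt": workflow, "client_id": client_id or uuid.uuid4().hex}
    return urllib.request.Request(
        f"{server}/prompt",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_workflow(request: urllib.request.Request) -> Dict[str, Any]:
    """Send the request; needs a running ComfyUI server (assumption)."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Separating request construction from sending makes the payload easy to inspect or log before anything reaches the GPU.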

See: Software & Algorithms › Diffusion Pipelines

DEPLOYMENT

How We Deliver

Deployment options range from fully local installations (the entire pipeline runs on the client’s hardware with no external dependencies) to cloud-hosted services with API endpoints and web frontends. For field use, we package pipelines as Android applications with on-device or edge-server inference. For organisations that require data privacy, all processing happens on-premises, and no images are sent to external servers. For organisations that need scale, we deploy on cloud GPUs with autoscaling and batch queuing. In every case, the client receives a documented, reproducible system with version-controlled workflows, clear parameter documentation, and training materials for their team.

Have a project that needs generative image capabilities? Get in touch.