Image analysis at scale • Multi-model deployment • Custom prompts • Real-time & batch

UNDERSTANDING THE TECHNOLOGY

Vision-Language Models for Image Analysis

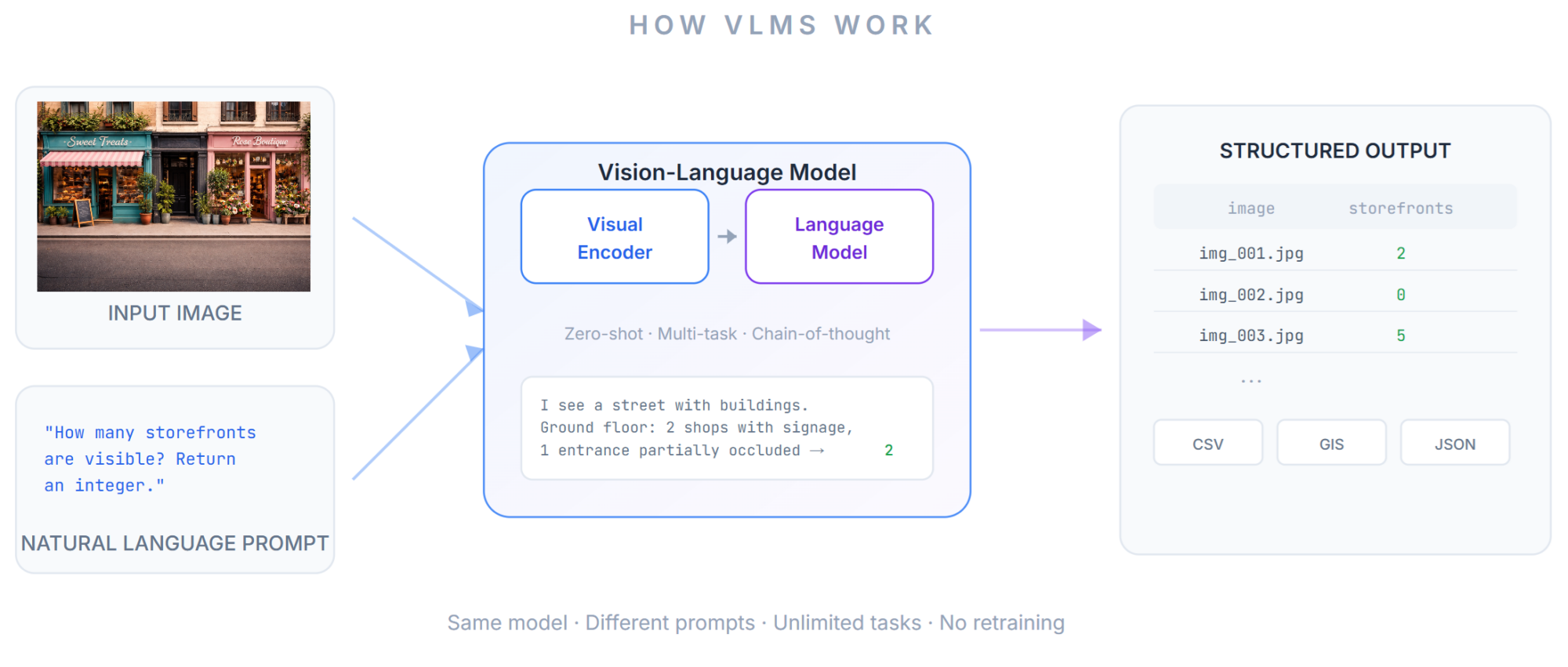

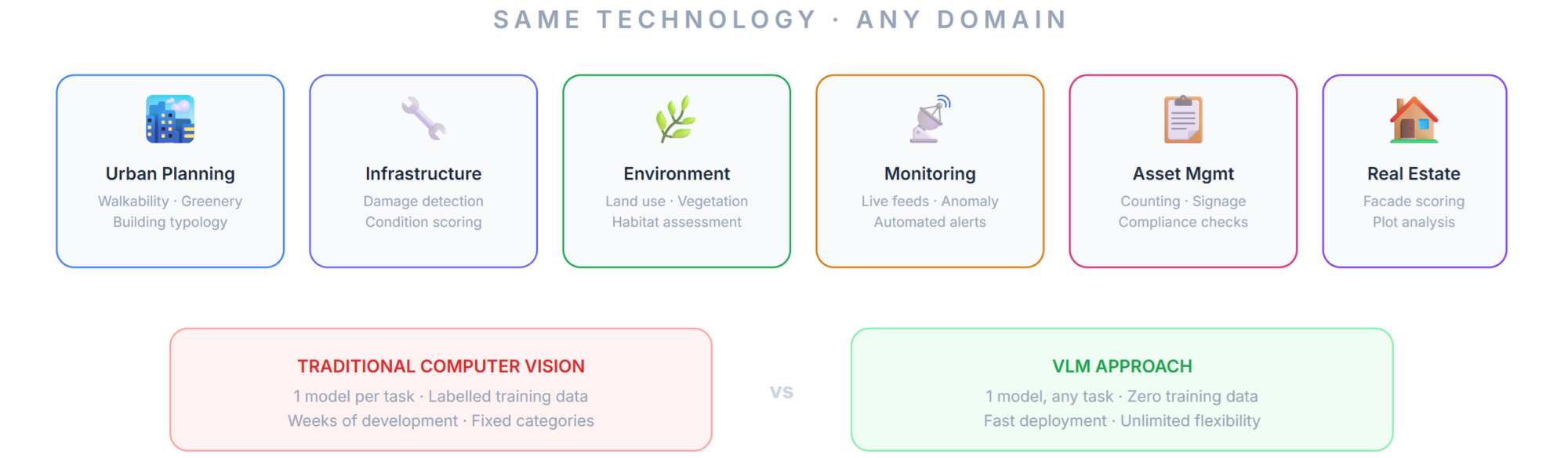

Vision-Language Models (VLMs) are a class of multimodal AI architectures that combine a visual encoder with a large language model decoder. Unlike traditional computer vision systems that detect predefined objects or classes, VLMs can interpret images and respond to arbitrary natural language queries about their content — counting objects, classifying scenes, estimating measurements, detecting features, and reasoning about spatial relationships.

This flexibility makes VLMs a powerful tool for applied image analysis. A single model can perform dozens of different analysis tasks on the same image simply by changing the text prompt, with no retraining, no labelled training set, and no task-specific model development. For organisations that need to extract structured data from large image datasets, such as urban surveys, site inspections, environmental assessments, or fleet monitoring, VLMs replace the cost of building and training a separate model for each task with the much lighter work of writing and validating prompts.

- Zero-shot flexibility. New tasks defined through natural language prompts, not model retraining.

- Domain knowledge injection. Text prompts encode domain rules, edge cases, and classification schemas directly.

- Multi-task analysis. Run multiple tasks per image in a single pass, from counting to classification to measurement.

- Interpretable reasoning. Built-in language output provides explanations of decisions, not just labels.

- Scalable batch processing. Structured CSV output feeds directly into GIS, statistical software, and databases.
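Multi-task analysis and CSV output only work together if each response can be split reliably into one column per task. The sketch below shows that parsing step under a hypothetical convention: the prompt asks the model to answer each task on its own line as `task: value`. The task names are illustrative, not a UGA schema.

```python
import csv
import io

# Hypothetical task names; a real schema comes from the project's prompt design.
TASKS = ["tree_count", "street_type", "sidewalk_present"]


def parse_response(image_id: str, text: str) -> dict:
    """Turn 'task: value' lines from one model response into a flat row.

    Tasks the model did not answer are left empty, so missing values stay
    visible in the CSV instead of shifting columns.
    """
    row = {"image_id": image_id, **{task: "" for task in TASKS}}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        key = key.strip().lower()
        if sep and key in TASKS:  # ignore free-text lines such as reasoning
            row[key] = value.strip()
    return row


def rows_to_csv(rows: list) -> str:
    """Serialise parsed rows to CSV text for GIS or statistical software."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["image_id", *TASKS])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

For example, the response `"tree_count: 4\nstreet_type: residential\nsidewalk_present: yes"` for image `img_001` becomes the row `img_001,4,residential,yes` under a header line.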

OUR APPROACH

How Urban Geo Analytics Deploys VLMs

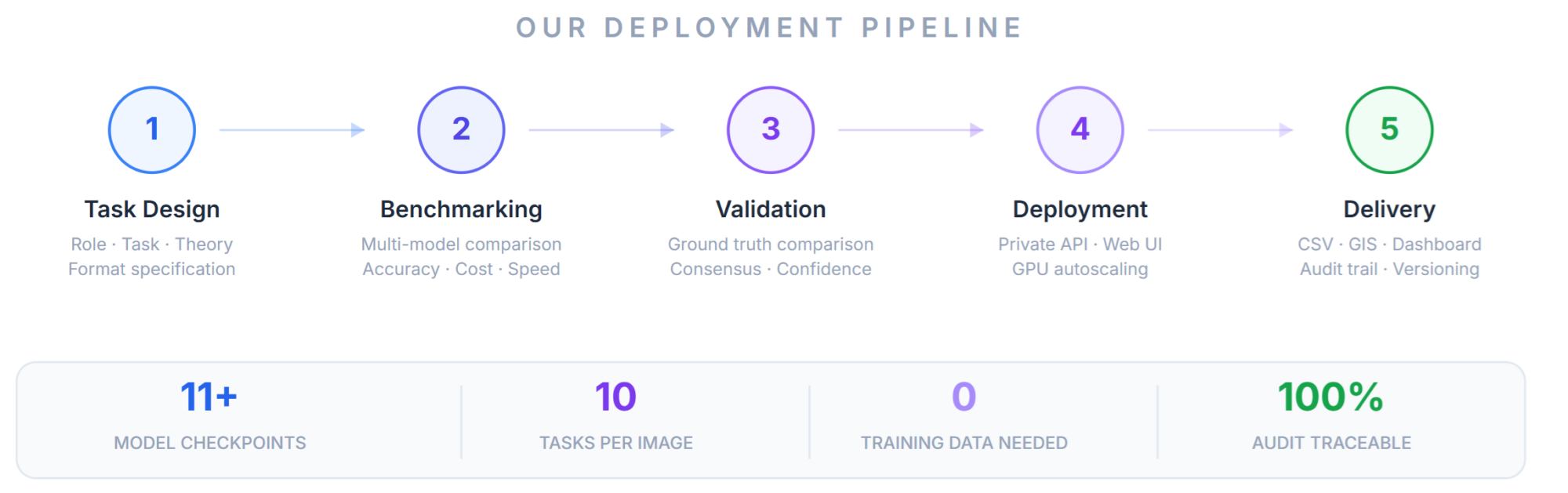

Urban Geo Analytics (UGA) designs and deploys VLM-based image analysis pipelines for clients who need to extract structured, quantitative data from large collections of photographs. Our deployments cover the full stack — from model selection and prompt engineering through to production infrastructure and result delivery.

Prompt Engineering & Task Design

The quality of VLM output depends critically on prompt design. UGA develops modular prompts built from four components: a role instruction (defining the model’s analytical persona), a task description (the specific question), a theory section (domain knowledge, classification rules, and edge cases), and a format specification (the expected output structure). Keeping this structure fixed across a project helps keep results consistent and machine-parseable across thousands of images.
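A minimal sketch of the four-part structure as code. The section labels, field names, and example wording are assumptions for illustration, not UGA's actual templates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """The four prompt components (field names are illustrative)."""
    role: str           # analytical persona
    task: str           # the specific question
    theory: str         # domain rules, classification schema, edge cases
    output_format: str  # expected output structure


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the components in a fixed order under labelled headings.

    A constant order and labelling across images keeps responses
    comparable and easier to parse in bulk.
    """
    sections = [
        ("ROLE", spec.role),
        ("TASK", spec.task),
        ("THEORY", spec.theory),
        ("FORMAT", spec.output_format),
    ]
    return "\n\n".join(f"{label}:\n{text.strip()}" for label, text in sections)


spec = PromptSpec(
    role="You are an urban analyst reviewing street-level photographs.",
    task="Count the street trees visible in the image.",
    theory="Count only trees rooted in the public realm; ignore trees behind private fences.",
    output_format="Answer with a single integer on one line.",
)
print(build_prompt(spec))
```

Because each component is a separate field, a new classification rule can be added to `theory` without touching the task or the output format that downstream parsing depends on.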

We design prompts iteratively against expert-annotated ground truth, measuring accuracy, bias, and inter-model agreement at each stage. For complex tasks — such as estimating distances from photographs or classifying vegetation typologies — we implement chain-of-thought reasoning strategies where the model explains its observations step by step before producing a final answer.
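The three measures named above can be computed with the Python standard library. The particular statistics chosen here are our illustrative assumption: accuracy as exact label match against expert annotations, bias as mean signed error on numeric tasks, and inter-model agreement as Cohen's kappa between two models.

```python
from collections import Counter
from typing import Sequence


def _check(a: Sequence, b: Sequence) -> int:
    """Validate paired inputs and return their common length."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and the same length")
    return len(a)


def accuracy(predicted: Sequence[str], truth: Sequence[str]) -> float:
    """Share of images where the model's label matches the expert label."""
    n = _check(predicted, truth)
    return sum(p == t for p, t in zip(predicted, truth)) / n


def mean_signed_error(predicted: Sequence[float], truth: Sequence[float]) -> float:
    """Bias on a numeric task: positive means the model overestimates on average."""
    n = _check(predicted, truth)
    return sum(p - t for p, t in zip(predicted, truth)) / n


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two models labelling the same images."""
    n = _check(labels_a, labels_b)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if both models labelled at random with their own label frequencies.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both models gave one identical label everywhere; kappa is undefined
    return (observed - expected) / (1 - expected)
```

Kappa is used rather than raw percentage agreement because two models that both favour the most common class will agree often by chance alone.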

Model Selection & Benchmarking

Different VLM families excel at different tasks. UGA benchmarks candidate models on client-specific datasets before committing to a production deployment. We work across the full spectrum of available architectures — including LLaVA-NeXT, Qwen2.5-VL, InternVL, BLIP-2, DeepSeek-VL, CogVLM, and emerging models — selecting the best fit for each project based on task requirements, accuracy, and computational constraints.

Our benchmarking protocol evaluates each model on the exact tasks and prompts that will be used in production, measuring proximity scores, parsing reliability, truncation rates, and computation cost. Models are therefore chosen on evidence from the client’s own data rather than on generic leaderboard rankings.
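A sketch of how these indicators might be aggregated per model and task. The record fields, the rule that a response using its full token budget counts as truncated, and the linear proximity formula are all assumptions for illustration; UVLM's own definitions may differ.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class RunRecord:
    """One model response from a benchmark run (field names are hypothetical)."""
    parsed_value: Optional[float]  # None when the answer could not be parsed
    truth: float                   # expert reference value
    tokens_generated: int
    max_new_tokens: int
    seconds: float                 # wall-clock inference time


def summarise(records: List[RunRecord], value_range: float) -> Dict[str, float]:
    """Aggregate benchmark indicators for one model on one numeric task."""
    n = len(records)
    parsed = [r for r in records if r.parsed_value is not None]
    # Assumption: a response that used its whole token budget was cut off.
    truncated = sum(r.tokens_generated >= r.max_new_tokens for r in records)
    # Assumed proximity: 1.0 for an exact match, falling linearly to 0.0
    # when the absolute error reaches the task's full value range.
    proximity = [
        max(0.0, 1.0 - abs(r.parsed_value - r.truth) / value_range) for r in parsed
    ]
    return {
        "parse_rate": len(parsed) / n,
        "truncation_rate": truncated / n,
        "mean_proximity": sum(proximity) / len(proximity) if proximity else 0.0,
        "mean_seconds": sum(r.seconds for r in records) / n,
    }
```

Running `summarise` once per candidate model on the same records layout gives a like-for-like table of the four indicators.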

Production Infrastructure

For clients who need VLM analysis at scale, UGA deploys production-grade infrastructure:

- Private API deployment. Cloud or on-premise GPU servers, with data never leaving the client’s security perimeter.

- Custom web frontends. Image upload, task configuration, batch monitoring, and result exploration, with role-based access control.

- GPU autoscaling. High-throughput batch processing with checkpointing and error recovery.

- Structured output. Results integrated directly with GIS platforms, databases, and downstream analytics pipelines.
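Checkpointing and error recovery can be as simple as writing each result to disk the moment it is produced. A minimal sketch, assuming a hypothetical `analyse` callable that wraps the actual model call; the retry count and backoff delays are illustrative.

```python
import csv
import time
from pathlib import Path
from typing import Callable, Iterable

FIELDS = ["image_id", "result", "status"]


def run_batch(
    image_ids: Iterable[str],
    analyse: Callable[[str], str],
    out_path: Path,
    max_retries: int = 3,
    base_delay: float = 1.0,
) -> None:
    """Analyse images, appending one CSV row per image as soon as it finishes.

    Re-running with the same out_path skips images already recorded as "ok";
    failed images are retried, so the file may hold an older "failed" row for
    an image that later succeeded (keep the last row per image downstream).
    """
    if max_retries < 1:
        raise ValueError("max_retries must be at least 1")

    has_rows = out_path.exists() and out_path.stat().st_size > 0
    done = set()
    if has_rows:
        with out_path.open(newline="") as f:
            done = {r["image_id"] for r in csv.DictReader(f) if r["status"] == "ok"}

    with out_path.open("a", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if not has_rows:
            writer.writeheader()
        for image_id in image_ids:
            if image_id in done:
                continue  # checkpoint hit: finished in an earlier run
            for attempt in range(max_retries):
                try:
                    row = {"image_id": image_id, "result": analyse(image_id), "status": "ok"}
                    break
                except Exception as exc:  # e.g. GPU out-of-memory or API timeout
                    row = {"image_id": image_id, "result": repr(exc), "status": "failed"}
                    if attempt < max_retries - 1:
                        time.sleep(base_delay * 2 ** attempt)  # exponential backoff
            writer.writerow(row)
            f.flush()  # persist the row before starting the next image
```

Appending and flushing per image, rather than writing once at the end, means an interrupted job loses at most the image in progress.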

We also deploy VLM inference on live video feeds with configurable analysis loops — frame sampling, continuous monitoring, anomaly detection, and automated alert generation. Applications include aerial surveillance, live infrastructure monitoring, and real-time situational awareness for field operations. Full audit logging, model versioning, and prompt version control ensure traceability from input image to final output value.
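A configurable analysis loop of this kind can be sketched as a generator over frames. This is an illustrative assumption, not UGA's implementation: `score_frame` is a hypothetical callable that sends one frame to the VLM and returns a numeric answer (for example, a vehicle count), and anomaly detection is a simple rolling z-score.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Callable, Iterable, Iterator, Tuple


def monitor(
    frames: Iterable[object],
    score_frame: Callable[[object], float],
    sample_every: int = 30,
    window: int = 10,
    z_threshold: float = 3.0,
) -> Iterator[Tuple[int, float]]:
    """Yield (frame_index, score) for sampled frames whose score is anomalous.

    Only every `sample_every`-th frame is sent to the model, which bounds GPU
    load on a live feed. A frame is flagged when its score deviates from the
    rolling window of recent scores by more than `z_threshold` standard deviations.
    """
    history = deque(maxlen=window)
    for index, frame in enumerate(frames):
        if index % sample_every:
            continue  # skip frames between samples
        score = score_frame(frame)
        if len(history) == window:
            mu, sigma = mean(history), pstdev(history)
            # A perfectly flat history (sigma == 0) is never flagged here.
            if sigma > 0 and abs(score - mu) / sigma > z_threshold:
                yield index, score  # the caller turns this into an alert
        history.append(score)
```

Keeping alerting outside the generator lets the same loop feed email, dashboard, or message-queue alerts without changes.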

APPLICATIONS

Where We Apply VLM Analysis

While our roots are in urban morphology and streetscape analysis, VLM technology is domain-agnostic. UGA deploys VLM solutions for:

- Urban planning and streetscape scoring. Walkability, greenery, building typology, and pedestrian infrastructure at city scale.

- Infrastructure inspection. Damage detection, crack counting, and condition scoring.

- Environmental monitoring. Land use classification, vegetation cover, and habitat assessment.

- Real-time monitoring and surveillance. Continuous analysis of live feeds from fixed cameras, vehicle-mounted systems, or aerial platforms.

- Asset management. Counting, reading signage, classifying equipment, and assessing compliance from site photographs.

- Real estate analysis. Facade classification, plot characterisation, and neighbourhood-scale morphological assessment.

OPEN-SOURCE FRAMEWORK

UVLM: Our Open-Source VLM Toolkit

Our VLM deployment expertise is built on UVLM (Universal Vision-Language Model Loader), an open-source framework we developed and maintain. UVLM provides a unified interface for loading, configuring, and benchmarking multiple VLM architectures on custom image analysis tasks — abstracting away all architecture-specific code behind a single inference function.
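Hiding architecture-specific code behind one function is a standard registry (dispatch) pattern. The sketch below illustrates that pattern only: the function names, the name-matching heuristic, and the stub backends are hypothetical and do not reproduce UVLM's code, which is available in the UVLM repository.

```python
from typing import Callable, Dict

# A backend takes (model_id, image_path, prompt) and returns the raw model text.
Backend = Callable[[str, str, str], str]
_BACKENDS: Dict[str, Backend] = {}


def register(family: str) -> Callable[[Backend], Backend]:
    """Decorator that records the backend responsible for one model family."""
    def wrap(fn: Backend) -> Backend:
        _BACKENDS[family] = fn
        return fn
    return wrap


@register("llava-next")
def _llava_next(model_id: str, image_path: str, prompt: str) -> str:
    # Stub: a real backend would load the checkpoint, apply this family's
    # chat template and image preprocessing, then generate text.
    return f"[llava-next:{model_id}] {prompt}"


@register("qwen2.5-vl")
def _qwen25_vl(model_id: str, image_path: str, prompt: str) -> str:
    # Stub standing in for the Qwen2.5-VL loading and generation code.
    return f"[qwen2.5-vl:{model_id}] {prompt}"


def family_of(model_id: str) -> str:
    """Map a checkpoint name to a family (illustrative name-matching heuristic)."""
    name = model_id.lower()
    if "llava" in name:
        return "llava-next"
    if "qwen2.5-vl" in name:
        return "qwen2.5-vl"
    raise ValueError(f"unsupported model: {model_id}")


def run_inference(model_id: str, image_path: str, prompt: str) -> str:
    """Single entry point: callers never touch family-specific code."""
    return _BACKENDS[family_of(model_id)](model_id, image_path, prompt)
```

Adding a new family then means registering one more backend; task code that calls `run_inference` stays unchanged.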

UVLM is distributed as a Google Colab notebook under the Apache 2.0 license, freely available for researchers and practitioners who want to run their own VLM evaluations. It currently supports 11 model checkpoints across the LLaVA-NeXT and Qwen2.5-VL families (from 3B to 110B parameters), with dual-backend abstraction, a multi-task prompt builder, consensus validation (2–5 runs), flexible reasoning support (up to 1,500 tokens), truncation detection, and resume-safe batch execution.
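Consensus validation over repeated runs combines naturally with step-by-step reasoning: each run reasons first and ends with a marked final line, the final values are extracted, and a majority vote decides. The `FINAL ANSWER:` marker, the 0.6 agreement threshold, and counting unparsed (for example, truncated) runs as dissent are our assumptions, not UVLM's documented behaviour.

```python
import re
from collections import Counter
from typing import List, Optional, Tuple

# Assumed output convention: free-text reasoning, then "FINAL ANSWER: <value>".
_FINAL = re.compile(r"^\s*FINAL ANSWER:\s*(.+?)[ \t]*$", re.IGNORECASE | re.MULTILINE)


def extract_final_answer(text: str) -> Optional[str]:
    """Return the last marked answer, lower-cased, or None if there is none
    (for instance because generation stopped before the final line)."""
    matches = _FINAL.findall(text)
    return matches[-1].lower() if matches else None


def consensus(responses: List[str], min_agreement: float = 0.6) -> Tuple[Optional[str], float]:
    """Majority vote over repeated runs of the same image and prompt.

    Returns (answer, agreement). The answer is None when no run parsed or
    the top answer's share of all runs is below `min_agreement`, which
    flags the image for manual review.
    """
    answers = [a for a in map(extract_final_answer, responses) if a is not None]
    if not answers:
        return None, 0.0
    top, votes = Counter(answers).most_common(1)[0]
    agreement = votes / len(responses)  # unparsed runs count against agreement
    return (top if agreement >= min_agreement else None), agreement
```

With three runs answering 3, 3, and 4, the vote returns `"3"` with an agreement of two thirds; with one truncated run and one run answering 5, agreement is 0.5 and the image is flagged.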

Source code: https://github.com/perezjoan/UVLM

Publication: Perez, J. and Fusco, G. (2026). UVLM: A Universal Vision-Language Model Loader for Reproducible Multimodal Benchmarking. arXiv preprint.

UVLM demonstrates our deep understanding of VLM architectures, inference pipelines, prompt engineering, and evaluation methodology. The same expertise that produced this open-source toolkit powers the production deployments we build for clients.

WORKING WITH US

Deployment Options

UGA offers flexible engagement models depending on project scope and client capabilities:

- Consulting. Model selection, prompt design, and benchmarking on your dataset, delivered as a report with a recommended configuration and validated accuracy metrics.

- Managed API deployment. A private REST API on dedicated GPU infrastructure, hosted on your cloud or ours, with authentication, rate limiting, logging, and both single-image and batch endpoints.

- Full platform. A custom web frontend with image upload, task configuration, batch monitoring, result exploration, role-based access, map integration for geo-referenced datasets, and export to GIS formats.

- Embedded integration. VLM analysis built directly into your existing software platform or data pipeline through SDK, webhook, or message-queue integration, with on-premise deployment for sensitive data.

- Training and transfer. We build the system, document it, train your team, and hand it over, with ongoing support as needed.

Ready to deploy VLM-powered image analysis? Get in touch.