What is it?

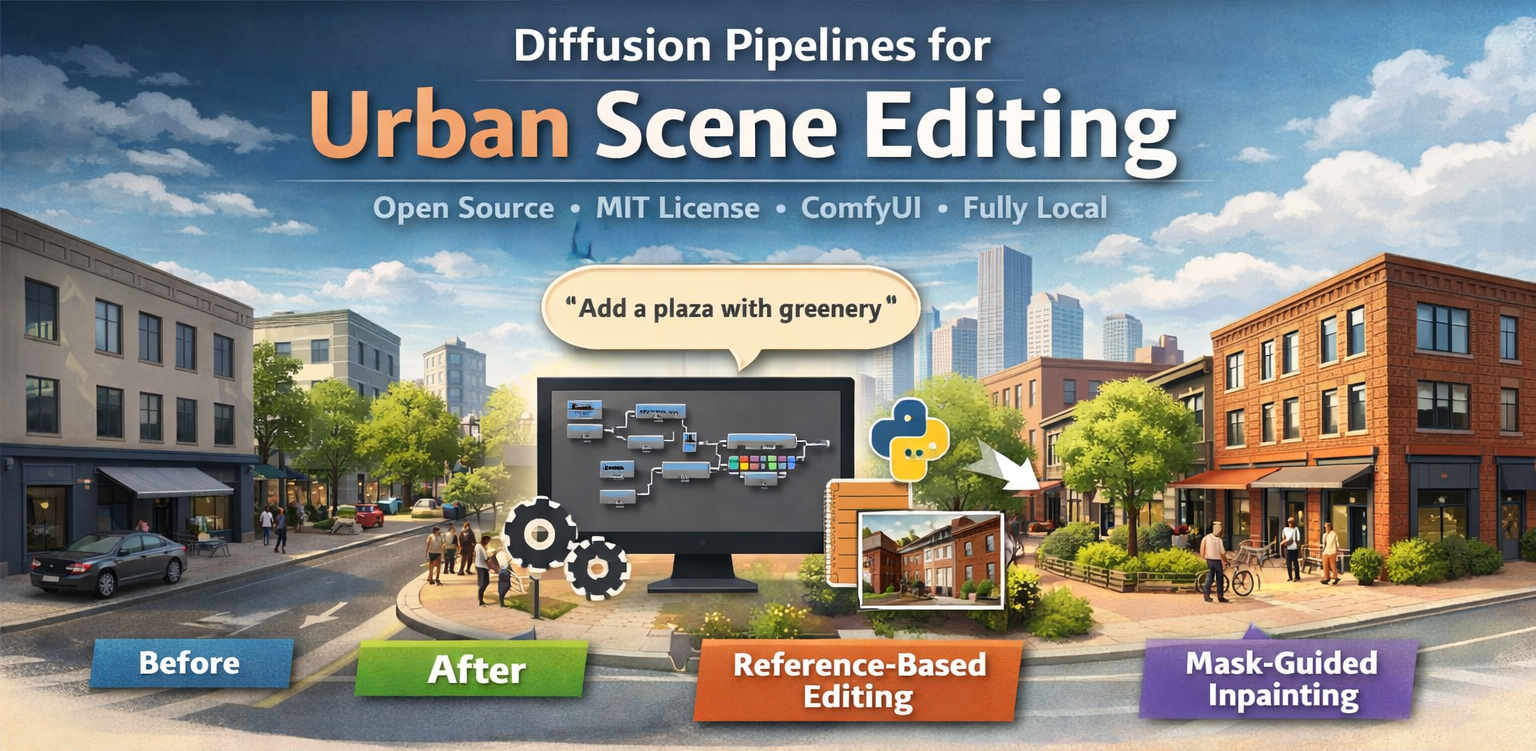

This project provides a set of open-source, fully local workflows for text-guided transformation of architectural and urban scenes. Built on ComfyUI and diffusion-based image editing models (currently Qwen-Image-Edit in GGUF format), the pipelines allow natural-language manipulation of urban imagery — without any reliance on cloud APIs, external GPUs, or proprietary services.

Users can modify streets, façades, and public spaces through text prompts: adding vegetation, changing building materials, converting roads to pedestrian plazas, or compositing elements from reference images. Everything runs offline, ensuring data privacy, reproducibility, and fine-grained control over every transformation.

The project is distributed as a collection of versioned ComfyUI workflow files plus custom Python nodes developed by Urban Geo Analytics. Each version introduces new capabilities — from basic single-image editing to reference-based composition, sequential batch processing, and mask-guided inpainting.

Versions & Capabilities

v1.0: Single-image editing. Basic text-guided editing with automatic aspect ratio adaptation. Prompt examples: “Add trees along the sidewalk”, “Turn this street into a pedestrian plaza.”

v1.1: Reference-based editing. Edit scenes using a second image as material or style reference. Example: “Change the walls to the brick material from image 2.”

v1.2: Sequential and batch edits. Custom Python nodes for automated iteration over image folders. Apply the same transformation to hundreds of urban photographs.

v1.3: Mask-guided inpainting. Localised editing with spatial control. Paint a mask region and composite elements from a reference image into that area. Example: “Add the cyclist from image 2 into the mask region.”
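The automatic aspect ratio adaptation mentioned for v1.0 can be illustrated with a small sketch. This is not the workflow's actual logic: the function name `fit_resolution`, the one-megapixel pixel budget, and the rounding to multiples of 8 are all assumptions chosen for illustration. The idea is to scale the input so its area matches a fixed budget while keeping the width-to-height ratio as close as possible to the original.

```python
import math


def fit_resolution(width: int, height: int,
                   target_pixels: int = 1024 * 1024,
                   multiple: int = 8) -> tuple[int, int]:
    """Scale (width, height) to roughly target_pixels, keeping the aspect ratio.

    target_pixels and multiple are illustrative defaults, not values taken
    from the project's workflows.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")

    # One uniform scale factor preserves the aspect ratio.
    scale = math.sqrt(target_pixels / (width * height))

    def snap(value: int) -> int:
        # Round the scaled side to the nearest multiple, never below one multiple.
        return max(multiple, round(value * scale / multiple) * multiple)

    return snap(width), snap(height)


if __name__ == "__main__":
    print(fit_resolution(1920, 1080))
```

Because both sides are snapped independently, the output ratio can drift slightly from the input ratio; a smaller `multiple` reduces that drift.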

Illustrations

v1.0

Prompt: “add a tree in the middle of the street”

v1.1

Prompt: “changes the walls of the house in image 1 by the brick wall material of image 2”

v1.2

Sequential and Batch Edits

v1.3

Edit with a Mask: “add cyclist from image 2 to the mask in image 1”

Key Features

- Text-driven urban editing. Modify streets, façades, vegetation, and public spaces through natural language prompts.

- Reference-based composition. Use a second image as a material, style, or object source for context-aware transformations.

- Mask-guided inpainting. Spatially targeted edits: define where in the scene to apply the transformation.

- Batch processing. Custom Python nodes for sequential iteration over image folders. Scale edits to entire datasets.

- Fully local and private. Runs entirely on your machine. No cloud APIs, no remote inference, no data leaves your system.

- ComfyUI integration. Modular node-based workflows. Import, customise, and extend through the ComfyUI interface.
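To make the batch-processing feature more concrete, here is a minimal sketch of sequential iteration over an image folder. It does not reproduce the project's custom nodes: the helper names `list_images` and `pick_image`, the extension list, and the wrap-around behaviour are assumptions. The sketch sorts the files so that a given index always maps to the same image, which is what makes repeated runs reproducible.

```python
from pathlib import Path

# Illustrative set of extensions; the real nodes may accept others.
IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".webp"}


def list_images(folder: str) -> list[Path]:
    """Return the image files in a folder, sorted for a stable order."""
    return sorted(
        p for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_EXTENSIONS
    )


def pick_image(folder: str, index: int) -> Path:
    """Return the image at position index, wrapping around at the end."""
    images = list_images(folder)
    if not images:
        raise FileNotFoundError(f"no images found in {folder}")
    return images[index % len(images)]
```

Calling `pick_image(folder, i)` with an increasing counter `i` walks through the folder one image per run, which is one simple way to apply the same prompt to a whole dataset.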

Applications

- Urban design prototyping. Rapid visualisation of streetscape interventions — greening, pedestrianisation, façade renovation — before any physical change.

- Participatory planning. Generate visual scenarios for public consultation. Show residents what proposed changes would look like on their street.

- Architectural visualisation. Material substitution, vegetation placement, and context-aware scene composition for design presentations.

- Dataset augmentation. Generate training data for downstream computer vision models by systematically transforming existing urban imagery.

Download & Installation

- Download and install either the git version of ComfyUI together with ComfyUI Manager, or the standalone ComfyUI build, which already includes the Manager.

- Open the desired workflow and, when prompted by the Manager, install the missing nodes. Then follow the Qwen-Edit-UGA tutorials to download the necessary checkpoints, VAE, CLIP, and other model files and place them inside your ComfyUI/models directory.

- To add our custom nodes directly (required from v1.2 onward), clone or download our repository into your ComfyUI/custom_nodes/ directory:

git clone https://github.com/perezjoan/ComfyUI-QwenEdit-Urbanism-by-UGA.git

- Restart ComfyUI.

The nodes will appear under the image/sequence and image/random categories.
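For readers who want to extend the workflows, the sketch below shows how a ComfyUI custom node typically declares the menu category it appears under. The class `ExampleSequenceIndex` and its inputs are hypothetical and are not part of this repository; only the general convention (`INPUT_TYPES`, `RETURN_TYPES`, `FUNCTION`, `CATEGORY`, and `NODE_CLASS_MAPPINGS`) follows the way ComfyUI discovers nodes.

```python
class ExampleSequenceIndex:
    """Hypothetical node: wraps a step counter into a valid sequence index."""

    # Menu path under which the node is listed in the ComfyUI interface.
    CATEGORY = "image/sequence"
    RETURN_TYPES = ("INT",)
    RETURN_NAMES = ("index",)
    # Name of the method ComfyUI calls when the node executes.
    FUNCTION = "run"

    @classmethod
    def INPUT_TYPES(cls):
        return {
            "required": {
                "step": ("INT", {"default": 0, "min": 0}),
                "count": ("INT", {"default": 1, "min": 1}),
            }
        }

    def run(self, step, count):
        # Outputs are returned as a tuple matching RETURN_TYPES.
        return (step % count,)


# ComfyUI reads these mappings from a package placed in custom_nodes/.
NODE_CLASS_MAPPINGS = {"ExampleSequenceIndex": ExampleSequenceIndex}
NODE_DISPLAY_NAME_MAPPINGS = {"ExampleSequenceIndex": "Example Sequence Index"}
```

The class runs as plain Python, so its logic can be checked outside ComfyUI before the package is dropped into the custom_nodes directory.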

Tutorials & Workflow Downloads

Open ComfyUI → File → Load → Workflow and choose the version file to import it into your workspace.

| Version | Description | Tutorial | Download |
|---|---|---|---|
| v1.0 | Basic Qwen Image Edit workflow for single-image editing. Adapts automatically to input ratio and size. | Link | Link |
| v1.1 | Adds image editing from a reference image and advanced sampling capabilities for complex scenes. | Link | Link |
| v1.2 | Sequential, batch edits & custom nodes | Link | Link |
| v1.3 | Edit with a mask & a reference image | Link | Link |
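Before importing a downloaded workflow, it can help to see which node types it uses, for example to anticipate which custom nodes the Manager will ask to install. The sketch below assumes the file is a ComfyUI workflow saved from the interface, where the JSON has a top-level `nodes` list and each entry carries a `type` field; workflows exported in API format are structured differently and would need another reader. The function names are illustrative.

```python
import json
from collections import Counter
from pathlib import Path


def load_workflow(path: str) -> dict:
    """Read a workflow JSON file into a dictionary."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def count_node_types(workflow: dict) -> dict:
    """Count how often each node type occurs in a UI-format workflow.

    Assumes a top-level "nodes" list whose items have a "type" key.
    """
    nodes = workflow.get("nodes", [])
    return dict(Counter(node.get("type", "<unknown>") for node in nodes))
```

A typical call would be `count_node_types(load_workflow("workflow.json"))`, where `workflow.json` stands in for whichever version file was downloaded.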

Credits

Developed by Urban Geo Analytics (UGA)

Based on open-source work by QuantStack, ComfyUI, and Qwen Image Edit contributors.

🔗 GitHub Repository: github.com/perezjoan/ComfyUI-QwenEdit-Urbanism-by-UGA

🪪 License: MIT

© 2025 Urban Geo Analytics — All rights reserved.